Summary of Sound Localization

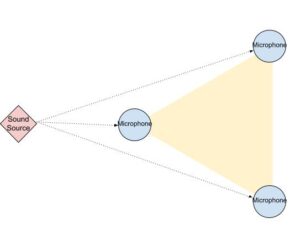

The sound localization project uses three microphones arranged in a triangle to detect the direction of sound by analyzing time delays via cross-correlation. Signals from electret microphones are filtered, amplified, and digitized using a PIC32 microcontroller with DMA and ADC. The system calculates relative timing between microphone pairs to estimate sound direction, displayed on a TFT screen. Debugging is aided by a DAC output. While functional, the system faces issues with phase shifts and filter cutoff frequencies affecting directional accuracy, and improvements like automatic sampling and higher bandwidth amplifiers are suggested for better performance.

Parts used in the Sound Localization project:

- Three electret microphones

- High-pass and band-pass filter circuits

- MCP6242 rail-to-rail operational amplifier

- PIC32 microcontroller

- Three ADC channels on PIC32 (AN0, AN1, AN5)

- Push button switch with pull-up/down resistors

- Adafruit TFT display breakout with SPI interface

- 12-bit, 2-channel MCP4822 Digital-to-Analog Converter (DAC)

- Voltage regulator (linear)

- 9V battery power supply

- SECABB prototyping board

- Resistors and capacitors for filter circuits and button interface

INTRODUCTION

We constructed a triangular arrangement of microphones to localize the direction an arbitrary sound is coming from. By recording input from the three microphones, we can cross-correlate the recordings to identify the time delay between them. Since the physical placement of the three microphones is known, the direction of the sound can be estimated from the time delays between the microphones. After estimating the direction, we display it as an arrow on an LCD display.

Rationale

Sound localization is an important part of how people sense what’s around them. Even without being able to see what’s there we can roughly figure out what’s around us based on sound. Attempting to replicate the same system in electronics can prove to be a valuable way to sense the environment for robotics, security, and a range of other applications.

Existing Projects

Sound localization is not an uncommon concept. It is well established that people use the time difference between a sound arriving at each of their ears to identify its direction. There have also been other ECE4760 final projects with this idea in mind. Three examples are the following:

Sound-Localizing Camera, Sound Triangulation Game, and Sound Targeting (See APPENDIX).

For the first two, the concept was to use a sound’s impulse and some analog hardware to generate pulses to identify the direction. Our approach was to be more like the last project’s idea. However, our intention was to use it to detect an arbitrary sound rather than a continuous tone.

HIGH LEVEL DESIGN

Background Math

The approximate maximum time delay between two microphones, measured in samples, is computed from the speed of sound in dry air at room temperature, the distance between the microphones, and the sampling rate.

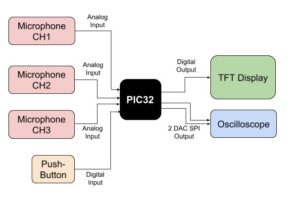
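As a rough sketch of this calculation, the delay in samples is the microphone spacing times the sampling rate, divided by the speed of sound. The C function below rounds that up to a whole number of samples. The function name and the 343 m/s constant are choices made for this illustration, and the 0.2 m spacing in the usage note is a hypothetical value, not the measured spacing of our microphones.

```c
#include <assert.h>

/* Speed of sound in dry air at roughly 20 C, in metres per second. */
#define SPEED_OF_SOUND_M_PER_S 343.0

/* Largest possible delay, in whole samples, between two microphones
 * spaced mic_spacing_m apart when sampling at sample_rate_hz.
 * The result is rounded up so a buffer sized from it is never too small. */
int max_delay_samples(double mic_spacing_m, double sample_rate_hz)
{
    assert(mic_spacing_m > 0.0 && sample_rate_hz > 0.0);

    double exact = mic_spacing_m * sample_rate_hz / SPEED_OF_SOUND_M_PER_S;
    int whole = (int)exact;                 /* truncates toward zero */
    return (exact > (double)whole) ? whole + 1 : whole;
}
```

With a hypothetical 0.2 m spacing, max_delay_samples(0.2, 25000.0) gives 15 samples, while max_delay_samples(0.2, 3000.0) gives only 2. This illustrates why a low sampling rate leaves very little resolution for distinguishing time delays.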

Hardware Block Diagram

The diagram below illustrates all the peripherals used in this project. The PIC32 uses its 10-bit ADC (Analog-to-Digital Converter) to read the analog inputs, one SPI (Serial Peripheral Interface) channel to write to the TFT display, and another SPI channel to write to a DAC (Digital-to-Analog Converter) for analog output.

Hardware/Software Tradeoffs

Choosing between hardware and software was a balance of ease of implementation, with a preference for running the system in the microcontroller because of the flexibility it provides. Since we wanted to compare sounds with possible time delays, most of the audio processing was done in the PIC32 microcontroller. Although some of the projects we referenced used hardware impulse detectors to determine the time of arrival, we opted to do this in software, since this allows us to detect sounds that are not impulses. Some amplification and signal conditioning were done in hardware filters and amplifiers, since this is necessary for the ADC to read the input signals properly and to reduce aliasing. Inside the PIC32, we decided to use DMA channels to pipe the data into a buffer rather than have the processor interrupt at a high rate to sample the ADC. This allows the microcontroller to do other processing while the sampling is occurring.

HARDWARE

Overview

The hardware for the project includes 3 microphone circuits, a voltage regulator, a button, a TFT display, and a SECABB (see APPENDIX) prototyping board. Each of the 3 microphone circuits includes an electret microphone, a set of filters, and an amplifier. The output of each microphone circuit is fed into an ADC channel on the PIC32. A separate linear voltage regulator provides power to the microphone circuits. The 3.3V power rail from the prototyping board is not used, since we found that noise from the microcontroller can find its way into the power rail and get picked up by the amplifiers. Furthermore, a 9V battery supplies the overall power, because we also found that 5V plug-in wall power supplies tend to have unwanted noise (presumably from some switching frequency). The button is used to start sampling; it connects the input pin to the 3.3V rail when pressed, and the microcontroller’s internal pull-down is enabled on that pin. The TFT display is used to display debugging information and to point in the direction of the sound. The microphone circuits and the voltage regulator were soldered onto solder board. Lastly, a 2-channel, 12-bit Digital-to-Analog Converter was used for debugging. It was used to play back the audio recording and to output the waveform of the correlation, both of which could be viewed on an oscilloscope.

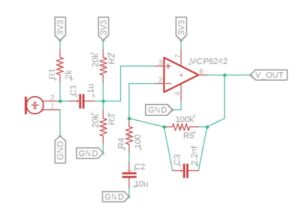

Microphone Circuitry

The microphone circuit consists of three parts. First is the microphone itself. Second is a high-pass filter to remove any DC values from the microphone before feeding the amplifier. Last is the amplifier which is an operational amplifier that is configured to act as a band-pass filter. The schematic for the microphone circuitry is shown below:

The MCP6242 (see APPENDIX) was the op-amp used for the amplifier. It’s a 3.3V compatible rail-to-rail op-amp. The initial high-pass filter has a cutoff of around 160 Hz. The high-pass filter on the band-pass amplifier was selected to roughly match the cutoff of the initial high-pass filter. The low-pass filter of the op-amp was selected to give roughly a 725 Hz cutoff frequency. These low cutoff frequencies were chosen because of the low sampling rate we were able to obtain with our original method. The gain used was 1000:1. This turns out to be an issue, as will be seen later in the Further Improvement section. Each output from a microphone circuit was attached to an I/O pin with analog functionality. The pins used were RA0, RA1, and RB3, which correspond to AN0, AN1, and AN5. The circuits were assembled on solder boards such as the one below:

Push Button

The push button circuit is relatively simple. It’s a push button switch connected in series with a 330 Ohm resistor. On one end of the switch circuit is the 3.3V rail and on the other end is a digital I/O pin of the PIC32. The PIC32 is configured to provide a weak pull-down so that the I/O pin reads 0 when the switch is open and 1 when it is closed. The switch was originally wired to the protoboard, but this turned out to cause issues since clicking the switch would cause the assembly to shake. To resolve the issue, a different switch was soldered to a length of stranded 24 AWG wire and connected in place of the on-board switch. This allows the system to be triggered from a slight distance. The push button switch was connected to RB7 as shown in the schematic below.

TFT Display

The TFT display is used for displaying debugging info and pointing in the direction of the source of the sound. The part we used is an Adafruit breakout that provides the TFT display, a TFT display driver, and an SD card reader (unused). The code for this was a library adapted from the one Adafruit supplies for running the TFT with an Arduino. The TFT breakout uses an SPI channel along with a few other digital I/O pins. The library used for the PIC32 can be found linked in the APPENDIX. The pinout for the TFT display is as configured on the SECABB development board, which is as follows: RB0->D/C, RB1->CS, RB2->reset, RB11->MOSI, and RB14->CLK.

DAC

The DAC used is an MCP4822 (see APPENDIX). This is a 12-bit, 2-channel DAC that is commonly used in the class. The DAC is only used for debugging the system and is not needed for the project itself. By sending a waveform to the DAC at a rate of 1 kHz, we can review what the output of the system looks like. The DAC uses the standard configuration for the SECABB, which is as follows: RB4->CS, RB5->MOSI, RB15->CLK.
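For readers unfamiliar with the MCP4822, each write is a single 16-bit SPI word: the top four bits select the channel, gain, and output state, and the low twelve bits carry the sample. The sketch below only builds that word and does not touch the SPI peripheral. The function and macro names are made up for this illustration, and the choice of 1x gain is an assumption rather than a setting confirmed by our code.

```c
#include <assert.h>
#include <stdint.h>

#define MCP4822_CHANNEL_B 0x8000u  /* bit 15: 0 selects channel A, 1 selects channel B */
#define MCP4822_GAIN_1X   0x2000u  /* bit 13: 1 = 1x gain, 0 = 2x gain (1x assumed here) */
#define MCP4822_ACTIVE    0x1000u  /* bit 12: 1 = output active, 0 = output shut down */

/* Build the 16-bit command word for one 12-bit sample.
 * channel_b: 0 for DAC channel A, non-zero for DAC channel B. */
uint16_t mcp4822_command(int channel_b, uint16_t value)
{
    assert(value <= 0x0FFFu);  /* the DAC only has 12 data bits */

    uint16_t word = (uint16_t)(MCP4822_GAIN_1X | MCP4822_ACTIVE | (value & 0x0FFFu));
    if (channel_b) {
        word = (uint16_t)(word | MCP4822_CHANNEL_B);
    }
    return word;
}
```

Under these assumptions, a full-scale sample on channel A becomes 0x3FFF and a zero sample on channel B becomes 0xB000.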

Further Improvement

For the hardware, further improvement would involve reworking the amplifier, removing the push button circuit, and having the audio circuits trigger the sampling. First, the range of frequencies for the amplifier’s band-pass filter was selected because the original system had a low sampling frequency. Since the current system can sample at 25 kHz without an issue, and with a few adjustments this can be increased to 40 kHz, we can raise the cutoff frequency of the low-pass filter to several kHz. However, one issue is that the gain-bandwidth product of the op-amp is roughly 550 kHz. At a gain of 1000:1, this limits the bandwidth of the system to about 550 Hz. It was brought to our attention by Professor Land that the gain-bandwidth product is not well characterized and thus can be quite different between chips. Adding a high-pass filter can also create a shift in the phase, and since each op-amp can be different, each microphone can get a different shift in phase. Since the cross-correlation relies on the phase of the input signals, this can mean that the location of the peak depends on the frequency of the sound heard by the microphones.
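The 550 Hz figure follows from the usual first-order approximation that closed-loop bandwidth is the gain-bandwidth product divided by the gain. A minimal sketch of that arithmetic in C is below; the function name is hypothetical, and the approximation ignores chip-to-chip variation, which is the concern raised above.

```c
#include <assert.h>

/* First-order estimate of an amplifier stage's closed-loop bandwidth:
 * gain-bandwidth product (Hz) divided by the closed-loop gain. */
double closed_loop_bandwidth_hz(double gain_bandwidth_hz, double gain)
{
    assert(gain_bandwidth_hz > 0.0 && gain >= 1.0);
    return gain_bandwidth_hz / gain;
}
```

With the figures quoted above, closed_loop_bandwidth_hz(550e3, 1000.0) gives 550 Hz. Dropping the gain to 100 would give 5500 Hz under the same approximation, which is why reducing the gain or choosing an op-amp with a higher gain-bandwidth product is suggested.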

SOFTWARE

Overview

In order to maximize the sampling rate of the system, we modified the clock prescaler to raise the clock frequency to 60 MHz. To begin a sound localization measurement, the push button needs to be pressed, and the status of the push button is maintained using a debouncing FSM (finite state machine). First, the system records the readings from each microphone channel, which is done using DMA (direct memory access) to minimize processor usage, and stores the recorded microphone data in arrays. Second, the recording of each channel is cross-correlated with that of the next channel, and the peak of the cross-correlation is identified along with its corresponding relative timing. Third, the relative timing for each pair of channels is combined with knowledge of the physical placement of the microphones to determine which of the three directions the sound came from. Last, the corresponding arrow is drawn on the TFT display to show the result. The following sections discuss each component of the software in detail, along with a debugging feature, an experimental method, and further suggestions.

DMA and ADC

The analog inputs from the three microphone channels are connected to three ADC channels, which are channels 0, 1, and 5. The ADC is configured in auto-sampling mode, which continuously starts the next sample once the previous conversion is completed. Each scan covers the three channels, and each result is converted to a 16-bit signed integer. The recording from the microphones was accomplished using DMA, specifically three DMA channels for the three microphone channels. Each DMA channel was configured to transfer the microphone data from an ADC buffer to a recording array, and the block size of each transfer is the size of the recording array, which is set to the sampling rate times a tenth of a second. Once the DMA channels are enabled by the function calls, the DMA transfers 16-bit cells at a rate set by the Timer2 interrupt, whose period is configured as sys_clock/sampling_rate = 2400 clock cycles. When the entire block is transferred, the DMA channel raises the DMA_EV_DST_FULL flag to signal completion of the transfer. The computation_thread checks the completion flag of all three DMA channels before beginning the sound localization computation.
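The numbers in this section can be checked with a few lines of arithmetic. The sketch below is plain C that runs on a host machine rather than PIC32 peripheral code, and the function names are invented for this illustration. The 60 MHz clock and 25 kHz sampling rate are the values quoted elsewhere in this report.

```c
#include <assert.h>
#include <stdint.h>

/* Timer period, in system clock cycles, that yields the requested sample rate. */
uint32_t timer_period_ticks(uint32_t sys_clock_hz, uint32_t sample_rate_hz)
{
    assert(sample_rate_hz > 0u);
    return sys_clock_hz / sample_rate_hz;
}

/* Number of samples in one recording: a tenth of a second of audio. */
uint32_t recording_length_samples(uint32_t sample_rate_hz)
{
    return sample_rate_hz / 10u;
}

/* Total buffer memory, in bytes, for all channels at 16 bits per sample. */
uint32_t recording_bytes(uint32_t sample_rate_hz, uint32_t channels)
{
    return recording_length_samples(sample_rate_hz) * channels * (uint32_t)sizeof(int16_t);
}
```

At 60 MHz and 25 kHz this gives a 2400-cycle timer period, 2500 samples per channel, and 15000 bytes of buffer for the three channels.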

Push Button

The button_thread continuously reads the input of the push button and uses the button debouncing FSM to update the current status of the push button. The FSM is used to properly capture the full push of the button. Button presses toggle the ready flag to signal the computation_thread to begin the DMA transfers and the computation. The following diagram illustrates the FSM.
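Separately from the diagram, a debouncing FSM of this kind can be sketched in C as follows. The four state names, the struct, and the function names are assumptions made for this illustration and may not match the states in our source code. The sketch only assumes that the input is polled periodically and that a press must be seen on two consecutive polls before it counts.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

typedef enum { NO_PUSH, MAYBE_PUSH, PUSHED, MAYBE_NO_PUSH } button_state_t;

typedef struct {
    button_state_t state;
    bool ready;   /* toggled once per confirmed press */
} button_fsm_t;

void button_fsm_init(button_fsm_t *fsm)
{
    assert(fsm != NULL);
    fsm->state = NO_PUSH;
    fsm->ready = false;
}

/* Advance the FSM by one poll of the button input (true = pin reads 1). */
void button_fsm_step(button_fsm_t *fsm, bool pressed)
{
    assert(fsm != NULL);
    switch (fsm->state) {
    case NO_PUSH:
        if (pressed) fsm->state = MAYBE_PUSH;
        break;
    case MAYBE_PUSH:
        if (pressed) {
            fsm->state = PUSHED;        /* press confirmed on a second poll */
            fsm->ready = !fsm->ready;
        } else {
            fsm->state = NO_PUSH;       /* a one-poll glitch is ignored */
        }
        break;
    case PUSHED:
        if (!pressed) fsm->state = MAYBE_NO_PUSH;
        break;
    case MAYBE_NO_PUSH:
        fsm->state = pressed ? PUSHED : NO_PUSH;  /* a bounce returns to PUSHED */
        break;
    }
}
```

Because the flag only toggles on the transition into PUSHED, holding the button down or bouncing on release does not trigger a second recording.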

Cross-Correlation

Once the microphone data is fully recorded in the arrays, the cross-correlation is calculated for each pair of microphone recordings: channel 0 with channel 1, channel 1 with channel 2, and channel 2 with channel 0. In the cross-correlation computation, a constant DC bias, measured independently for each microphone channel, is added to every recorded value. Each cross-correlation is computed by sliding the middle ¾ section of the first recording along the second recording and computing the sum of products over the fully overlapped samples at each shift. The resulting cross-correlation values are stored in an array whose size is ¼ of the recording size. The size of the sliding window was chosen after experimenting with multiple window sizes. This size allows a large number of recorded values to be used for the correlation between the two channels while leaving enough shifts to cover the maximum time shift between recordings, which is constrained by the physical distance between the microphones. As the cross-correlation values are computed, the peak value and its index are identified and recorded for each of the three pairs, to be used in computing the direction of the source sound.
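The sliding-window scheme described above can be sketched in plain C as follows. This is an illustration under stated assumptions rather than our source code: the function name and signature are made up, the bias arguments are the per-channel offsets added to each sample, and the returned lag is measured relative to the centered position of the window so that a positive value means the first recording leads the second.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Slide the middle 3/4 of `first` along `second` and return the signed lag,
 * in samples, at which the sum of products is largest.
 * n is the recording length and must be a non-zero multiple of 8. */
int peak_lag(const int16_t *first, const int16_t *second, size_t n,
             int16_t bias_first, int16_t bias_second)
{
    assert(first != NULL && second != NULL);
    assert(n >= 8 && n % 8 == 0);

    const size_t window = 3 * n / 4;  /* samples used at each shift */
    const size_t start  = n / 8;      /* where the middle 3/4 begins in `first` */
    const size_t shifts = n / 4;      /* number of correlation values */

    int64_t best_value = 0;
    size_t best_shift = 0;

    for (size_t s = 0; s < shifts; s++) {
        int64_t sum = 0;
        for (size_t i = 0; i < window; i++) {
            int32_t a = first[start + i] + bias_first;
            int32_t b = second[s + i] + bias_second;
            sum += (int64_t)a * b;
        }
        if (s == 0 || sum > best_value) {
            best_value = sum;
            best_shift = s;
        }
    }
    /* Shift `start` lines the window up with its own position in `second`. */
    return (int)best_shift - (int)start;
}
```

For example, if both recordings contain a single pulse and the pulse in the second recording arrives three samples later than in the first, the function returns 3. Swapping the two arguments returns -3.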

Computing Direction

The direction of the source sound is computed using the three peak indexes detected during the cross-correlation calculations. A 3-bit encoding is used to determine the direction. Each bit of the encoding indicates whether the corresponding peak index is positive or negative. A positive index means the first recording occurred prior to the second recording, so the microphone of the first recording is closer to the source of the sound than the microphone of the second recording. The encoding is constructed by the following assignment.

encoding = (peak_index[0]>0)<<2 | (peak_index[1]>0)<<1 | (peak_index[2]>0);

Next, the 3-bit encoding is used to determine the direction of the sound using the following logic, which selects the microphone channel that receives the signal before both of the other two microphone channels.

if (encoding ==0b100 || encoding ==0b110){

direction = 0;

}else if (encoding ==0b010 || encoding ==0b011){

direction = 1;

}else if (encoding ==0b001 || encoding ==0b101){

direction = 2;

}

The resulting direction is displayed on the TFT display by drawing an arrow pointing to one of the three microphones.
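The encoding and decoding steps above can be collected into a small self-contained C sketch that can be tested off the microcontroller. The function names are hypothetical. Returning -1 for the encodings 000 and 111, which the logic above does not handle, is an assumption made for this illustration, not behavior taken from our code.

```c
#include <assert.h>
#include <stddef.h>

/* Pack the signs of the three peak indexes into a 3-bit code:
 * bit 2: channel 0 leads channel 1
 * bit 1: channel 1 leads channel 2
 * bit 0: channel 2 leads channel 0 */
int encode_peaks(const int peak_index[3])
{
    assert(peak_index != NULL);
    return ((peak_index[0] > 0) << 2) |
           ((peak_index[1] > 0) << 1) |
            (peak_index[2] > 0);
}

/* Return the channel that hears the sound before both others,
 * or -1 when the three comparisons do not single out one channel. */
int decode_direction(int encoding)
{
    switch (encoding) {
    case 4: case 6: return 0;   /* binary 100, 110 */
    case 2: case 3: return 1;   /* binary 010, 011 */
    case 1: case 5: return 2;   /* binary 001, 101 */
    default:        return -1;  /* binary 000, 111: inconsistent ordering */
    }
}
```

For instance, peak indexes of {3, -1, -2} encode to binary 100, which decodes to microphone 0.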

DAC (For Debugging)

Two DAC channels were configured for the purpose of debugging the system. The DAC is configured to output the cross-correlation data over SPI, streaming continuously at a rate set by the Timer 3 interrupt, which was configured at 60 kHz. The output pins were connected to an oscilloscope in order to observe and analyze the cross-correlation signals; the most recently computed cross-correlation array is streamed out repeatedly.

Another Method Tried

The first method we attempted for the sound localization computation was to continuously calculate the cross-correlation of each microphone channel with a pre-recorded recording. Because computing this continuously on the fly is so intensive, the sampling rate was very restricted. The highest sampling rate the microprocessor could sustain while running at 60 MHz was 3 kHz, which was too low to resolve the timing delay between the microphone channels with reasonable resolution. The results obtained from this method did not show a consistent correlation that matched the theoretical expectation.

Pseudocode Snippet

The following is pseudocode for each function and thread. For the full source code, please check the APPENDIX.

// Timer 3 Interrupt Service Routine

void __ISR(_TIMER_3_VECTOR, ipl2) Timer3Handler(void) {

mT3ClearIntFlag();

transfer_to_DAC_Channel_A();

transfer_to_DAC_Channel_B();

}

// Compute the direction of the source sound

// draw result on TFT Display

void compute_direction() {

// assigned encoding

encoding = (peak_index[0] > 0) << 2 | (peak_index[1] > 0) << 1 | (peak_index[2] > 0);

direction = check_cases_identified_direction(encoding);

draw_TFT(direction);

}

// cross-correlate each pair of microphone recordings

void cross_correlate() {

for (each channel) {

for (shift range) {

for (index range) {

correlate_value += (mic(channel) + bias) * (mic(next channel) + bias);

}

Update_new_peak_value();

}

}

}

// the thread waits for button push to record and call

// cross_correlate and compute_direction

static PT_THREAD(protothread_computation(struct pt * pt)) {

PT_BEGIN(pt);

while (1) {

wait_until_button_pushed();

Reset_button;

Start_DMA_transfer(All_3_channels);

wait_until_all_DMA_complete();

// cross-correlate the new recordings

cross_correlate();

//compute and display direction

compute_direction();

}

PT_END(pt);

}

// This thread update and maintain the state of the push button

static PT_THREAD(protothread_button(struct pt * pt)) {

PT_BEGIN(pt);

while (1) {

button = Read_Button_input();

// FSM for the button press debouncing

transition_case_for_button_state();

}

PT_END(pt);

}

// Main Function. Configure and initialize Timers, DAC, ADC, DMA, TFT, Pins, Threads.

void main(void) {

// Configure timer3 interrupt

Config_Timer3(sys_clock / 1000);

// Configure timer 2 interrupt

Config_Timer2(sys_clock / sampling_rate);

/// SPI setup for DAC

Configure_SPI_for_DAC();

//Configure and enable the ADC

Enable_ADC_for_3_Channels();

// DMA Configure for 3 channels

Enable_3_DMA_Channels_on_Timer2_and_Raise_DONE_flag();

// set up i/o port pin

set_digital_input_and_pulldown(push_button_input_pin);

// config threads

PT_setup();

// setup system wide interrupts

INTEnableSystemMultiVectoredInt();

// init the threads

initialize_threads();

// config and init the display

tft_config();

// round-robin scheduler for threads

while (1) {

PT_SCHEDULE(protothread_button(&pt_button));

PT_SCHEDULE(protothread_computation(&pt_computation));

}

}

RESULT

The sound localization works well, and the computation delay is too short to notice. The device works best when the system is able to catch the section of the sine sweep that is within the band-pass range of the microphone circuitry, so that the frequency characteristics of the recordings are fully captured for comparison. This means that the accuracy of the system depends on the timing of the user who clicks the button and on the op-amps used for the system, since these determine where the upper cutoff is. The following images were captured while testing the device.

In both images, the upper trace shows the recorded signal from one of the microphones and the bottom trace shows the cross-correlation result from two of the microphone channels. The cross-correlation trace uses a high signal to mark where the data starts and ends. This allows us to see the start and end of the cross-correlation along with the location of the peak. In the first image, the peak is clearly shifted to the left, indicating that one of the recordings is leading the other, while in the second image the peak is centered, showing that the two recordings arrived at roughly the same time.

While the system was usually correct, there were issues with consistency. The scope of the project was reduced to detecting the direction aligned with each microphone because the readings were not consistent, and were not quite correct even when they were consistent. This may be an issue with the microphone circuits. The low-pass filtering caused by the gain-bandwidth product of the op-amp may be causing the microphones to see different phase shifts on the input signals. We believe this to be the case because the cross-correlations come back as clean waveforms like those depicted above, but with the peak located in the wrong place. This would indicate an issue with either the microphone circuits or the sampling. Since the CPU clock is operating at 60 MHz and the ADC is sampling in the MHz range as well, we generally aren’t concerned about the delay between ADC samples or about the DMA copying the data sequentially into the buffer. This leaves the microphone circuits causing some undesired phase shift in the input signals. To make matters worse, since the phase shift difference could be frequency dependent and the test signal was a linear sweep through frequencies, the results of the sampling and cross-correlation depended on the user’s ability to push the button at the right time in the frequency sweep. This is not reliable, since most people can’t hit the right moment to within a tenth of a second, so it would be better if the system could trigger the sampling on its own, which would be more consistent.

On the other hand, there weren’t really any safety concerns with the project. The device simply records an audio signal and shows a direction on a display. It doesn’t have any moving parts, and it doesn’t emit any electromagnetic waves, acoustic waves, or other energy output that could be harmful to, or interfere with, the surrounding environment and living beings.

With regard to usability, the device needs quite some work. First, removing the push button would be a crucial improvement, since the device currently requires a properly timed button push and therefore an accurate user. Second, the frequency range of the band-pass circuitry should be expanded to allow the device to detect a wider range of acoustic signals. Last, using more microphone channels might improve the resolution of the detected sound direction. Thus, there are many aspects of the device that require further development in order to take full advantage of it.

CONCLUSION

The final design did not meet the expectations originally set for the project. As a result, the scope of the project was scaled back to pointing towards the closest microphone. The primary issue we ran into was that the system wasn’t giving particularly consistent results for the relative time delays between each pair of microphone channels. For the most part, the system would resolve the correct microphone, but the inconsistency meant that it pointed in the wrong direction several times. Originally, we wanted to be able to resolve a direction at a much higher resolution, ten degrees. The cross-correlation code and the indexing of the peak appear to give the correct results. The cross-correlation waveform looks like the theoretical expectation, but it occasionally has the peak in the wrong place. The code that returns the index of the peak also appears to give the correct index based on the observed peak of the waveform. Thus, the source of the inconsistency is most likely not the computation but rather the microphone circuits. As noted in the results section, the chosen cutoff frequencies for the filters in the microphone circuits were not the correct frequencies for the range of sounds over which we would like the system to operate. Furthermore, the low-pass effect of the op-amp’s gain-bandwidth product could also be leading the circuits to apply different phase shifts to the audio. If we were to redo this project, we’d likely reduce the gain and choose an op-amp with a higher gain-bandwidth product. This might reduce or eliminate the variation we’re getting in the correlation peaks. Furthermore, we’d choose a different cutoff frequency for the low-pass filter, which would allow our project to work with a higher range of frequencies. Having the system trigger sampling on its own might also help reduce the inconsistency that we were seeing.

The system does not have any major safety concerns. The project simply takes audio readings and attempts to determine the direction the audio came from. The only output is shown on a TFT display, which is not bright enough to be damaging to people’s vision. With regard to privacy, the audio recordings are limited to one-tenth of a second in duration, and they are discarded once the next sample is taken. While it is possible in theory to extract the audio from the DAC channel that’s being used for debugging, a finalized version would have this debugging feature removed. As for ethical considerations, all references used in constructing this project are noted and linked where applicable. Our code is based on examples given on Cornell’s ECE4760 course website. The pages for the ECE4760 course references are linked in the APPENDIX, and the linked pages also contain the example code that our code uses as a basis. Our system does not raise any legal issues, as it does not transmit any radio waves and is not intended to go on a vehicle.

Source: Sound Localization