Week 01

August 15, 2010 (2 hours):

Met as a team after class to finish writing preliminary project proposal.

January 17, 2010 (2 hours):

Brainstormed the possibilities of developing a multi-touch table. The multi-touch table would require an external camera as the input for the computer vision algorithm. My research showed that image processing and computer vision algorithms such as blob tracking and real-time contour tracing can be used to track fingers on the multi-touch surface. After studying the feasibility of the project, I was confident that it would work and would be promising.
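To make the blob tracking idea concrete, here is a minimal sketch in Python of blob detection on a single frame. It assumes the camera image has already been reduced to a small grayscale grid; the function name `find_blobs`, the threshold of 128 and the sample frame are all hypothetical and chosen only for illustration. It labels 4-connected bright regions with a flood fill and reports each region's centroid and area.

```python
from collections import deque

def find_blobs(frame, threshold=128):
    """Return (centroid_x, centroid_y, area) for each 4-connected bright region."""
    rows, cols = len(frame), len(frame[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or frame[r][c] < threshold:
                continue
            # Breadth-first flood fill collects every pixel of this blob.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and frame[ny][nx] >= threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            area = len(pixels)
            cx = sum(x for _, x in pixels) / area
            cy = sum(y for y, _ in pixels) / area
            blobs.append((cx, cy, area))
    return blobs

# Hypothetical 5x6 frame with two bright, fingertip-like regions.
FRAME = [
    [0,   0,   0, 0, 0,   0],
    [0, 200, 210, 0, 0,   0],
    [0, 220, 230, 0, 0, 255],
    [0,   0,   0, 0, 0, 255],
    [0,   0,   0, 0, 0,   0],
]
BLOBS = find_blobs(FRAME)
```

Tracking across frames would then amount to matching each centroid to the nearest centroid in the previous frame, which this sketch does not attempt.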

WEEK 01 SUMMARY

Accomplishments: Submitted preliminary project proposal.

Weekly Work Total: 4 hours

Project Work Total: 4 hours

Week 02

January 19, 2010 (2 hours):

Met as a team to formulate PSSC for presentation in class on September 2.

January 20, 2010 (1 hour):

I worked on the class PSSC1 presentation. I also worked on laying out the rough draft of our PSSCs.

Met as a team to finish the final project proposal. Met with Chuck and got the Intel Atom board. The Intel Atom board was packaged in a black box. Abhisheyk and I will try to install Windows Embedded XP SP2. I also personally researched the main differences between Windows Embedded XP and the normal XP Professional edition. This is important because we want to know whether we can use software packages such as Microsoft Visual Studio 2008, OpenCV and other Computer Vision libraries. From what I researched, Embedded XP differs in the following ways:

1. Embedded XP does not support Windows File Protection (WFP). WFP prevents system files from being overwritten unless the files being installed are digitally signed by Microsoft. We do not require this feature to implement hand tracking in C++/OpenCV.

2. Embedded XP does not support the XP tour (which is useless anyway).

3. Embedded XP does not support the Add/Remove Programs option in the Control Panel.

4. Embedded XP does not have online activation. This is fine since we are going to obtain that while downloading XP on the Atom board.

5. Embedded XP does not support Windows Update, Image Acquisition and MSN Explorer. We can live without these for sure.

Taking my research into account, I concluded that we can safely proceed with our plans for the Intel Atom.

January 23, 2010 (5 hours):

I worked on getting the camera to work with our proposed design. The camera had to support IR filtering. We have a PS3 Eye camera, the one usually used by gamers to play PS3-based online games. This camera is of high resolution with the following specs:

1) 640×480 pixel resolution

2) Wider angle for viewing and

3) 30 fps

The high resolution helps track the finger blobs precisely. The high frame rate will give us a good chance to perfect our blob detection. I basically hacked into the PS3 Eye to facilitate IR-based tracking. To do this, I removed the IR filter from the PS3 Eye. This was my first attempt, and it failed. I am ordering another PS3 Eye camera to play around with. Hopefully this one won’t break.

WEEK 02 SUMMARY

Accomplishments: PSSC and project proposal finalized. Worked on getting the Sony PS3 Eye camera to work with our system (not working properly yet). Installed Win XP and set up other vital features on the Intel Atom board. Evaluated Embedded Win XP for implementing the image processing part.

Weekly Work Total: 15 hours

Project Work Total: 19 hours

Week 03

Jan 26, 2010 (8 hours):

I met with Abhisheyk mainly to work on developing the top-level framework for the Computer Vision based algorithms and its relationship with the graphical user interface we are going to build in the weeks to come. I read about Haar classifiers in the Computer Vision literature to get a feel for image processing and vision systems. I also looked up the Lucas-Kanade algorithm to get a feel for tracking algorithms.

We have decided to exchange information between the Vision module and the GUI via the TUIO protocol. TUIO was developed specifically to transfer blob-tracking information efficiently in multi-touch applications. It is a communication protocol built to deal with multi-touch technology, and it is built on top of the OSC protocol. It is one of the most important elements in this kind of project, because it allows you to build multi-touch applications using several kinds of technologies. The TUIO server sends the finger tracking data on port 3333. The client application (anything from Java to Processing, Flash, etc.) can listen on this port and use the data for constructing the user interface. This is an illustration:
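Alongside the illustration, the layout of one finger update can be sketched in code. The following Python sketch decodes a single OSC message of the form `/tuio/2Dcur set s x y X Y m` (session ID, normalized position, velocity, acceleration), which is how the TUIO 1.1 specification describes a cursor update. The helper names and the sample values are hypothetical; a real TUIO stream wraps such messages in OSC bundles sent over UDP, which this sketch does not handle.

```python
import struct

def _pad(raw: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)

def _read_string(packet: bytes, offset: int):
    """Read one padded OSC string; return it with the offset of the next field."""
    end = packet.index(b"\x00", offset)
    text = packet[offset:end].decode("ascii")
    # Skip the terminator and padding up to the next 4-byte boundary.
    return text, (end + 4) & ~3

def parse_osc_message(packet: bytes):
    """Decode the address and the s/i/f arguments of a single OSC message."""
    address, offset = _read_string(packet, 0)
    tags, offset = _read_string(packet, offset)
    args = []
    for tag in tags[1:]:  # the type tag string starts with ','
        if tag == "s":
            value, offset = _read_string(packet, offset)
        elif tag == "i":
            (value,) = struct.unpack_from(">i", packet, offset)
            offset += 4
        elif tag == "f":
            (value,) = struct.unpack_from(">f", packet, offset)
            offset += 4
        else:
            raise ValueError("unsupported OSC type tag: " + tag)
        args.append(value)
    return address, args

# Hypothetical update: session 7 at normalized position (0.25, 0.5), at rest.
SAMPLE = (_pad(b"/tuio/2Dcur") + _pad(b",sifffff") + _pad(b"set")
          + struct.pack(">i", 7)
          + struct.pack(">5f", 0.25, 0.5, 0.0, 0.0, 0.0))
ADDRESS, ARGS = parse_osc_message(SAMPLE)
```

Here `ARGS[1]` is the session ID that identifies a finger for as long as it stays on the surface, and `ARGS[2]` and `ARGS[3]` are its x and y position in the range 0 to 1.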

I also researched techniques for accomplishing Total Internal Reflection on the touch table surface. This is important because the camera can see only the points where the total internal reflection breaks on the surface. For example, when a user touches the tabletop, the total internal reflection breaks at that point and the camera sees a “blob” there.
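The condition for total internal reflection follows from Snell's law: light inside the sheet stays trapped when it hits the surface at more than the critical angle, arcsin(n_outside / n_inside), measured from the surface normal. A small Python sketch of that calculation follows. The refractive indices are assumed typical values (about 1.49 for acrylic, about 1.40 for silicone), not measurements of our materials, and the function name is hypothetical.

```python
import math

def critical_angle_deg(n_inside: float, n_outside: float = 1.0) -> float:
    """Critical angle (degrees from the surface normal) for light leaving n_inside."""
    if n_outside >= n_inside:
        raise ValueError("total internal reflection needs n_inside > n_outside")
    return math.degrees(math.asin(n_outside / n_inside))

N_ACRYLIC = 1.49   # assumed typical value for acrylic (plexiglass)
N_SILICONE = 1.40  # assumed typical value for silicone rubber

# Against air, rays steeper than roughly 42 degrees stay inside the sheet.
ANGLE_AIR = critical_angle_deg(N_ACRYLIC)

# Where something denser than air presses on the surface the critical angle
# rises, so rays between the two angles escape there and become visible.
ANGLE_SILICONE = critical_angle_deg(N_ACRYLIC, N_SILICONE)
```

Under these assumed values, rays travelling between about 42 and 70 degrees are trapped against air but leak out at a contact point, which is the bright spot the camera picks up.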

Later in the night, I struggled for a couple of hours trying to install OpenCV along with OpenFrameworks. After a lot of frustration, I figured out that the Visual Studio installation on Embedded Win XP lacked the following DLL files: MSVCP17.dll and MSVCR1.dll. The error was fixed, and OpenCV/OpenFrameworks now runs in the Visual Studio environment.

Jan 27, 2010 (3 hours):

I worked further on hacking into the PS3 Eye camera. We want to make sure that we do not have to order another camera. This is the sequence of operations I followed to remove the IR filter from the camera (thanks to AlexP):

Jan 28, 2010 (5 hours):

I met with Abhisheyk to buy a graphics LCD. We researched for quite a while before purchasing the OLED from 4D-Systems. The display has a built-in touchscreen module and measures 2.4″. The OLED is ultra-thin and low power.

As a team, we met with David and Prof. Mark Johnson to clarify and discuss our PSSCs. We were later joined by Malek, who gave us helpful advice about choosing an appropriate Bluetooth controller.

Jan 29, 2010 (3 hours):

First, we met Malek to discuss which microcontroller to use for our project. He suggested using Freescale over PIC and Atmel. We decided to use the Freescale 9S12 for our project. Later in the afternoon, we charted our design (given in Presentations) to employ two Freescale microcontrollers.

WEEK 03 SUMMARY

Accomplishments: OpenCV and other image processing tools successfully set up. TUIO and flosc successfully set up and initialized. Got the PS3 Eye camera to finally work as per our requirements. Errors with the Visual Studio environment fixed. Decided upon using the Freescale 9S12 microcontroller.

Weekly Work Total: 19 hours

Project Work Total: 38 hours

Week 04

February 2, 2010 (6 hours):

Met with the entire team to complete the Design Constraint Analysis. I also researched ways to “keep” the IR rays from escaping the plexiglass. Found a forum which had a tutorial on making a “silicone rubber” sheet on blotting paper. This will hopefully lead to successful internal reflection.

February 4, 2010 (8 hours):

We had already obtained all the parts to construct the table. We decided to build the table in my apartment since it is too big to fit in the Senior Design lab. Abhisheyk and I had purchased the plexiglass, the metal rods and other hardware from Menards a few days ago. I also came to know that the total internal reflection would not break if we just keep the plexiglass as it is on the table. This is mainly because the glass allows the passage of IR rays and thus there is no internal reflection. I had been looking into addressing this problem yesterday. Abhisheyk and I went to Walmart the other day to buy silicone, xylol and other essentials to make the magical “rubber sheet”. A detailed description of how the table was constructed, along with the installation of the IR LEDs and the “silicone rubber” sheet, is as follows:

1. The first step was to construct the table using the metal joints and rods. After a few painful hours, we managed to get the table standing on the ground. These are a few sample pictures I took in the process of developing the tabletop.

2. In between constructing the table, I started mixing up the ingredients to make the magical “silicone rubber” potion. We first used a plastic glass for the mixture, but it melted because of the combination of silicone and xylol. We got a painter’s plastic jar to perform the mixing. A 1:1 ratio of silicone and xylol was stirred for about 15 minutes. A sheet of blotting paper was taped onto the wall. A paintbrush was used to apply the mixture onto the blotting paper. Approximately three layers of this mixture were applied to the paper. The procedure was completed after drying the paper for about 2 hours. These are some photos illustrating the entire procedure:

February 5, 2010 (2 hours):

Helped Himanshu complete the homework for that week.

WEEK 04 SUMMARY

Accomplishments: Constructed a working tabletop. Developed silicone rubber sheet to allow Total Internal Reflection. Helped Himanshu with the presentation and homework.

Weekly Work Total: 16 hours

Project Work Total: 44 hours

Week 05

February 8, 2010 (7 hours):

I experimented with using Processing as the GUI for our application. Processing is a computational art toolkit from MIT. It supports OpenGL and a custom 3D graphics API (P3D). Processing is natively written in Java but has its own development environment. Since we were going to write the USB drivers in Java, I added all of Processing’s *.jar files to a Java project so that I could use it from plain Java. I could compile basic Processing code in a Java applet. I also proceeded to write a custom TUIO port listener. This small program recognizes finger activity on our table and draws it onto a Java applet. This is a sample picture of the applet running:

Each finger blob has a unique Identifier. To develop a GUI app, my custom interface can act as an API to be called. The API would return the current blob on the table (via an ID number) along with the co-ordinates with respect to the resolution of the projector.
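The mapping from tracker output to projector coordinates can be sketched as follows. This is an illustration in Python rather than the Java/Processing code described above. It assumes TUIO-style normalized coordinates in the range 0 to 1 and a 1024×768 projector; the resolution, the `Blob` type and the function name are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Blob:
    blob_id: int  # unique identifier of the finger blob
    x_px: int     # horizontal position in projector pixels
    y_px: int     # vertical position in projector pixels

def to_projector_pixels(blob_id, x_norm, y_norm, width=1024, height=768):
    """Scale normalized (0..1) coordinates to pixel coordinates."""
    # Clamp first so a noisy reading just outside the range stays on screen.
    x_norm = min(max(x_norm, 0.0), 1.0)
    y_norm = min(max(y_norm, 0.0), 1.0)
    # A value of exactly 1.0 would land one pixel past the edge, hence min().
    x_px = min(int(x_norm * width), width - 1)
    y_px = min(int(y_norm * height), height - 1)
    return Blob(blob_id, x_px, y_px)

BLOB = to_projector_pixels(7, 0.25, 0.5)
CORNER = to_projector_pixels(8, 1.0, 1.2)
```

A GUI application would call such a function for every blob update and draw or hit-test at the returned pixel position.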

February 10, 2010 (5 hours):

I got the PIC24F development board from Chuck and tried playing around with it. There were some library problems, which were later resolved. I went through the sample programming tutorial and ran my first PIC program on the development board to display characters on the on-board LCD. Completed the PADS tutorial.

WEEK 05 SUMMARY

Accomplishments: Initial GUI development on the Intel Atom side. Started development tutorials on the PIC24F dev board. Ran sample programs and developed an API in Processing for the blob tracking mechanism and TUIO protocol. Completed PADS tutorial.

Weekly Work Total: 12 hours

Project Work Total: 56 hours

Week 06

February 15, 2010 (2 hours):

Met with Abhisheyk and tried interfacing the OLED LCD with the 9Sc12 microcontroller to see if it works. Development in progress…

February 16, 2010 (4 hours):

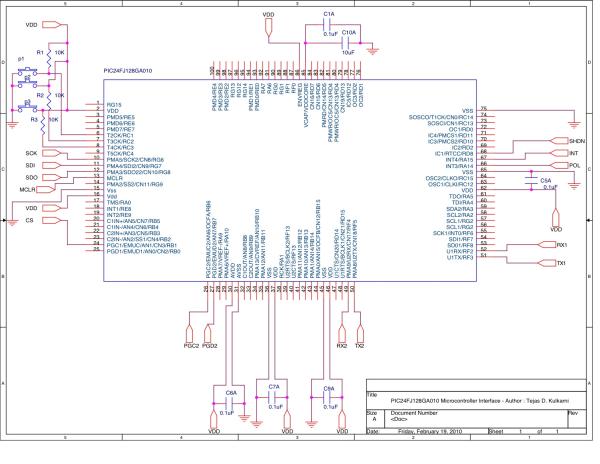

I worked on the TSSC presentation and the preliminary schematic. The preliminary schematic is nowhere close to what the circuit will look like. It is a mere depiction of the top-level diagram and a reference for what I will be working on tomorrow.

February 18, 2010 (10 hours):

I pretty much spent all day in the lab. Earlier that day, I talked to Chuck and tried searching for the cause of the issues we were having with the Bluetooth, without any luck. I then talked to David, who fixed the problem after identifying that it was caused by the varying voltage levels in the SCI communications. All we had to do was connect the Rx and Tx lines directly from the microcontroller to the Bluetooth module (we wasted 3 nights because of our stupidity).

I also worked on drawing the entire schematic of our PCB in OrCAD. Currently the schematics represent the entire circuit (with the possibility of minor bugs in the power supply).

The PIC24F microcontroller is specified to run within a voltage range of 2V to 3.6V. The normal operating voltage is 3.3V. The low-dropout regulator circuit provides the +3.3V for operating the microcontroller. In the proposed design, this device will interface with several digital peripherals: the battery gauge monitor, the graphical LCD, the Bluetooth module and the SD/MMC breakout. The design utilizes two UART modules, one SPI module, three digital pins for battery gauge monitoring and three digital pins for pushbuttons. To program the microcontroller’s flash memory, a complete pinout is provided along with the necessary circuitry as per the microcontroller datasheet. I provided 4 pinouts for the flash programming: MCLR, PGD2, PGC2 and the other power and ground connections as specified in the datasheet for the microcontroller.

The DX160 graphical LCD used in the circuit takes a 5V supply voltage and can accept commands via either a standard RS-232 pin or a TTL signal pin. The LCD can be connected directly to a PC RS-232 transmitter pin for easy debugging. The TTL signal pin cannot be directly interfaced with the PIC24F transmitter pin (U1Tx), because the PIC24F UART transmitter operates within the range of 0V to 3.3V. I found an Intersil CD40109BMS low-to-high voltage shifter to translate the 3.3V on the transmit (Tx) pin of the PIC24F to 5V for the LCD’s receive (Rx) pin. It is important to note that the LCD does not have a transmit (Tx) pin, since the LCD module does not support any acknowledgement. The LT1129-5 regulator provides the power to drive the Bluetooth module. The Bluetooth module is interfaced with the PIC24F microcontroller via UART, as shown in my schematics.

February 19, 2010 (12 hours):

A consecutive all-nighter in the lab. I worked on interfacing the SD card with our design. I started interfacing it directly with the PIC24F. Earlier, Abhi, Ankur and I had relied on the 9S12 microcontroller. I soldered some pinouts onto the extension card slot of the PIC24F board to interface it with our peripherals. I connected the SD card according to the data I obtained from the SanDisk SD card manual. I studied the format used to read from and write to the SD card. I interfaced the card by placing it into a SparkFun SD card slot we purchased. Since the voltage levels of the PIC24F and the SD card match, no additional circuitry was needed for voltage management.

To interface with the SD card, the card first has to be initialized to operate in SPI mode. I successfully accomplished this, since I got an acknowledgement from the card. I also tried reading 512 bytes of data (the default) from the SD card successfully. However, there were problems when reading. This is probably because I need to send a CRC checksum as the last byte. The CRC calculation involves the SD card manufacturer’s ID. I guess I am doing something wrong in computing the CRC. To verify, I hooked up oscilloscope probes to DI and DO on the SD card slot to monitor the SPI communication. Look at the following illustrations:

2. Here are the inputs and outputs obtained from DO and DI on the SD card breakout. The pictures showing one waveform represent the data going from the micro (DO) to the SD card (DI). The pictures showing two probes follow this convention: the signal at the top is from the micro to the SD card, while the signal below is in the opposite direction. I verified that the signals were being sent the way they were supposed to be sent (and they were correct). Also note that the pictures are inverted horizontally. In one of the pictures you can see how the SD card responds a few clock cycles after the PIC sends data commands on the output line (top signal).
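For the CRC question raised in the February 19 entry, here is a minimal sketch in Python (not the PIC24F firmware) of the 7-bit CRC that closes each 6-byte SD command frame, assuming that is the checksum in question. The polynomial x^7 + x^3 + 1 is the one the SD specification gives for command CRCs; the function names `crc7` and `command_frame` are hypothetical. The widely documented CMD0 frame, 40 00 00 00 00 95, serves as a check. As far as the SD specification describes, CRC checking is off by default for most commands in SPI mode once the card has accepted CMD0, so a wrong CRC may not be the only possible cause of the read problem.

```python
def crc7(data: bytes) -> int:
    """CRC-7 with polynomial x^7 + x^3 + 1, processed most significant bit first."""
    crc = 0
    for byte in data:
        for _ in range(8):
            crc = (crc << 1) & 0xFF
            # XOR in the polynomial when the incoming bit and the bit
            # shifted out of the 7-bit register differ.
            if (byte ^ crc) & 0x80:
                crc ^= 0x09
            byte = (byte << 1) & 0xFF
    return crc & 0x7F

def command_frame(index: int, argument: int) -> bytes:
    """Build a 6-byte SPI-mode command: start bits, index, argument, CRC7, stop bit."""
    body = bytes([0x40 | (index & 0x3F)]) + argument.to_bytes(4, "big")
    return body + bytes([(crc7(body) << 1) | 0x01])

# CMD0 (GO_IDLE_STATE) with a zero argument, the first command sent to the card.
CMD0 = command_frame(0, 0)
```

Comparing the bytes produced this way with the bytes captured on the DI line is one way to tell whether the firmware's CRC routine is at fault.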

For more detail: Tejas Kulkarni’s Lab Notebook